Introduction

BMW & autonomous driving - 95% of test kilometers are virtual

Autonomous cars are not only the domain of Tesla and Google; BMW is also working on self-driving vehicles. How does it develop them, and what does its team of 1800 engineers use?

The first known experiment with self-driving cars took place in 1925 - just 40 years after the first automobile (Karl Benz built it in 1885 and filed the patent in January 1886). More interesting results, however, had to wait for the development of autonomous driving systems, which began in the second half of the 20th century. In 2020, several companies are already testing self-driving cars with control systems at levels 4 and 5, but in mass testing the highest level is still level 3.

According to many, the most advanced autonomous driving system today is the one from Tesla Motors, although this opinion may be influenced by its high visibility, marketing and accessibility. Personally, I would be interested in a comparison with the system from Waymo LLC (a sister company of Google under Alphabet); unfortunately, most of the trustworthy articles are outdated, dating from 2018.

Both companies have a huge lead, especially thanks to years of testing and data from billions of autonomous miles traveled. But autonomous driving systems are not the sole prerogative of companies such as Tesla or Google. This article gives you a look over the shoulders of the developers at BMW's development center for self-driving cars, located on a campus in Unterschleißheim, Germany (a small city 17 km north of Munich).

The principle of self-learning ... (with a little help)

Machine learning (AI) systems, which include autonomous control, are based on a set of functions derived from the analysis of huge volumes of road traffic data. Even so, training a self-driving car can be compared to a person's learning process: a child starts to perceive objects from birth and, over the years, gradually learns by mere observation to recognize a road, a turn, a pedestrian crossing, traffic lights, traffic signs and other features, and how they relate to the behavior of traffic in a given area. First in general, from the position of a spectator, and later in detail, by studying the traffic rules in a driving school.

If we simplify the driving principle ad absurdum, a self-steering system for cars must be able to:

- Recognize objects in the car's surroundings flawlessly, within a few milliseconds

- Know the rules of the road and follow them

- Behave naturally, predictably, safely and responsibly

- Have an electronic equivalent of "common sense" (4th and 5th level systems)

Points 2-4 are more or less the essentials of a digital control model trained on a set of rules and a huge amount of input data from point 1, which is also the most problematic one. Cars move in an unprotected outdoor environment with a huge number of different objects of varying density, frequently changing weather conditions and anomalies in the behavior of the surrounding traffic. Production models must be prepared and properly tested for all of this. Such a volume of tests would be enormously expensive or outright infeasible in the real world, and this is where virtual space comes in.

95% of all test kilometers are performed in virtual space

During the development and testing of its level 3 autonomous driving system, BMW intends to cover approximately 240 million kilometers with self-driving cars. The vast majority (95%) of this distance will be driven virtually, in a virtual space simulating the real world. This is not unusual: virtual testing is a very fast and efficient way to develop and validate functionality in a number of disciplines. For example, ordinary manufacturing companies can tune their production processes using Prespective, a general-purpose tool for virtual simulations. For the development of the autonomous driving system for BMW cars, more specialized software is used, although it is still only a superstructure over the publicly available game engine Unity. The superstructure was created and is maintained by a 12-member team of developers around Nicholas Dunning. Its purpose is to greatly simplify the development and testing process and to reduce the number of tools that the 1800 developers working on AD for BMW have to use.
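As a quick check of the figures above, the split between virtual and real kilometers works out as follows. This is plain arithmetic on the two numbers quoted in the article; the variable names are illustrative only.

```python
# Split of BMW's planned test distance, using the figures quoted above.
TOTAL_KM = 240_000_000   # approximate planned total (from the article)
VIRTUAL_PERCENT = 95     # share driven in simulation (from the article)

virtual_km = TOTAL_KM * VIRTUAL_PERCENT // 100
real_km = TOTAL_KM - virtual_km

print(f"virtual: {virtual_km:,} km")  # virtual: 228,000,000 km
print(f"real:    {real_km:,} km")     # real:    12,000,000 km
```

So even the "small" 5% share still corresponds to roughly 12 million kilometers on real roads.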

The tool has become essential for the development and testing of autonomous control models at BMW, as it brings a number of benefits and solves several problems:

- It converts data from sensors and control models into a 3D model that reflects the shape of the world, allowing developers to easily analyze model failures and behavior

- It enables the creation of partial and complete automated tests

- It allows developers to test very dangerous situations that are difficult or even impossible to test in the real world

- It allows developers to set up the environment: traffic volume, types of vehicles, traffic signs, types of road lanes and lane boundaries, pedestrians, and visibility (time of day, rain, fog ...)

- It enables training and testing of the autonomous driving system in a virtual space, simultaneously in several types of weather and traffic conditions

- Based on the measurement of the distance between the controlled car and obstacles, it detects dangerous situations and reports them to developers

- It allows developers to set "breakpoints" and thus easily check data at critical moments of the analyzed situation

- It allows developers to plan the trajectory of the vehicle

- It allows continuous independent testing and training (24/7/365 mode).
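To make the environment settings from the list above more concrete, here is a minimal sketch of how one simulated scenario might be described in code. It is not BMW's actual data model: the `Scenario` class, its field names, the `Weather` enum and the crude night-time rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Weather(Enum):
    CLEAR = "clear"
    RAIN = "rain"
    FOG = "fog"


@dataclass(frozen=True)
class Scenario:
    """Hypothetical description of one simulated environment."""
    hour_of_day: int                      # 0-23, drives the lighting
    weather: Weather
    vehicles_per_km: float                # traffic volume
    pedestrians: int = 0
    vehicle_types: Tuple[str, ...] = ("car",)

    def __post_init__(self) -> None:
        # Reject settings that cannot describe a real situation.
        if not 0 <= self.hour_of_day <= 23:
            raise ValueError("hour_of_day must be in 0..23")
        if self.vehicles_per_km < 0 or self.pedestrians < 0:
            raise ValueError("traffic and pedestrian counts must be non-negative")

    @property
    def is_dark(self) -> bool:
        # Crude assumption for this sketch: dark from 21:00 to 05:59.
        return self.hour_of_day >= 21 or self.hour_of_day < 6


night_fog = Scenario(hour_of_day=23, weather=Weather.FOG,
                     vehicles_per_km=12.5, pedestrians=3)
print(night_fog.is_dark)
```

Keeping the scenario immutable (`frozen=True`) means the same description can be reused safely across many parallel test runs.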

Development process and automated tests

In the same tool as in the video above, developers can create a series of automated tests, either from scratch or by replicating an interesting situation that occurred during a test of a self-driving car in the real world. There are 2 types of tests:

- Small-scale feature tests - Quick behavior tests in individual situations (up to 1 minute in length)

- Large-scale system tests - Tests of the broader behavior of the AD system on a route between points A and B (for example between 2 German cities)

Managing the source code for the autonomous driving system is similar to developing any other software product. Each engineer or team is responsible for a certain portion of the system. All changes are first processed and tested locally and are pushed to the main development branch only once the team is satisfied with the results.

Functionality tests to verify the integrity of the behavior are performed after each adjustment, simultaneously in different light and weather conditions.

Besides the light and weather conditions (day, night, rain, fog, snow, ice), developers can modify every test by changing the type of traffic, traffic signs and lanes, the presence of pedestrians, and other attributes.
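Running one test under many conditions amounts to building the combinations of those conditions. The sketch below shows the idea with `itertools.product`; the `build_variants` helper, the dictionary layout and the added "clear" weather value are assumptions for illustration, since the article does not describe how BMW's tool enumerates its variants.

```python
from itertools import product

# Illustrative condition lists. The article names these conditions;
# "clear" is added here as an assumed baseline.
LIGHT = ["day", "night"]
WEATHER = ["clear", "rain", "fog", "snow", "ice"]


def build_variants(test_name, light=LIGHT, weather=WEATHER):
    """Return one variant description per light/weather combination."""
    return [
        {"test": test_name, "light": l, "weather": w}
        for l, w in product(light, weather)
    ]


variants = build_variants("pedestrian_crossing")
print(len(variants))  # 10
print(variants[0])    # {'test': 'pedestrian_crossing', 'light': 'day', 'weather': 'clear'}
```

Each variant could then be handed to a separate simulation instance, which is what makes testing "simultaneously" in different conditions practical.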

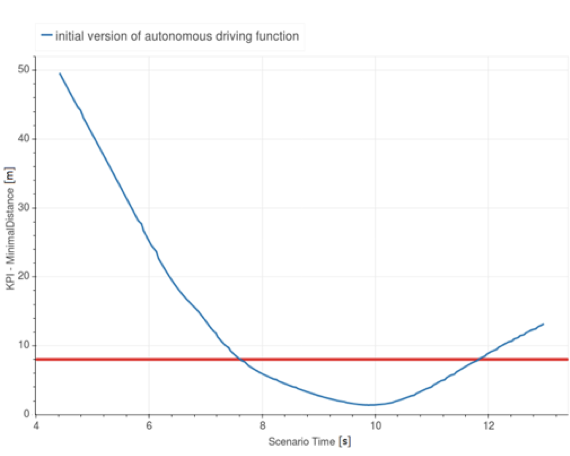

The evaluation of the tests is based mainly on the achieved distances between the self-driving vehicle and obstacles and on the data on the rate of change of movement of the autonomous vehicle. The distance measurement function with evaluation in a 2D graph can be activated in the lower right corner of the editor. See the chart below for an example of the output and its evaluation.

Graph of the distance (Y axis) between an autonomous vehicle and an obstacle over time (X axis). Changing the speed too quickly (when not necessary) or letting the distance between the vehicle and the obstacle drop below the red line is considered a failure.


Failures, together with situations in which the model behaved differently than expected, are reported back to the developers during testing. The developers then evaluate and remediate the behaviour in these situations: they analyze the situation, find the problem, fix it, rerun the tests, verify that all changes improved the results, and push to the main branch.
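The pass/fail rule described for the distance graph can be sketched as a small check over recorded samples. This is a simplified illustration, not BMW's evaluation code: the `Sample` structure, the `evaluate` function and the threshold values are hypothetical, and the "if not necessary" judgment about a speed change is not modeled here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sample:
    t: float         # time in seconds
    distance: float  # distance to the nearest obstacle in metres
    speed: float     # vehicle speed in m/s


def evaluate(samples: List[Sample], min_distance: float, max_accel: float) -> List[str]:
    """Return failure messages; an empty list means the run passed."""
    failures = []
    # Rate of change of speed between consecutive samples.
    for prev, cur in zip(samples, samples[1:]):
        dt = cur.t - prev.t
        if dt <= 0:
            raise ValueError("samples must be ordered by strictly increasing time")
        accel = (cur.speed - prev.speed) / dt
        if abs(accel) > max_accel:
            failures.append(f"t={cur.t:.1f}s: speed changed at {accel:.1f} m/s^2")
    # Distance below the limit (the "red line" in the graph).
    for s in samples:
        if s.distance < min_distance:
            failures.append(f"t={s.t:.1f}s: distance {s.distance:.1f} m below limit")
    return failures


run = [
    Sample(0.0, 30.0, 20.0),
    Sample(1.0, 18.0, 19.0),
    Sample(2.0, 4.0, 10.0),  # hard braking and too close
    Sample(3.0, 6.0, 9.0),
]
for message in evaluate(run, min_distance=5.0, max_accel=6.0):
    print(message)
```

In this made-up run, the sample at t=2.0s triggers both checks: the speed drops by 9 m/s within one second and the distance falls to 4 m, below the 5 m limit.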

Testing critical situations

The biggest challenge is handling critical situations. These are, for example, cases where an obstacle becomes visible only at a very late stage, revealed by the movement of another traffic participant. A pedestrian running out from behind a bus, a driver who does not respect the rules and laws, or a stationary car that is not visible until another car (driving ahead of the self-driving car) reveals it with a quick evasive maneuver - all these situations are recorded in the video below. Not to mention ethical issues.